Category: Lifestyle

How we choose to live our lives and what we do with our time. The pleasure of democratic freedom.

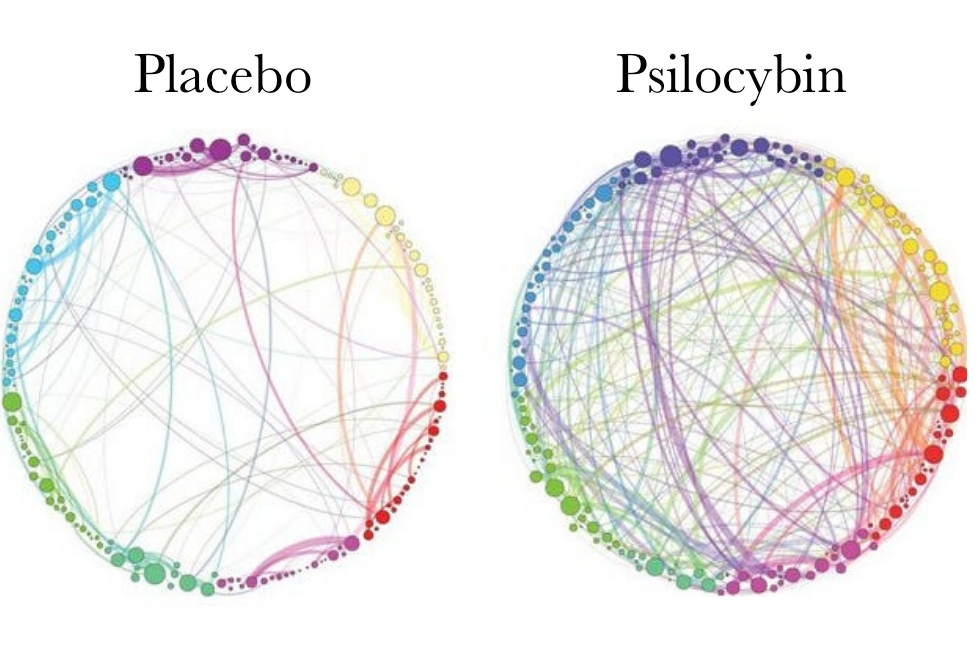

Psychedelic Silver Bullets

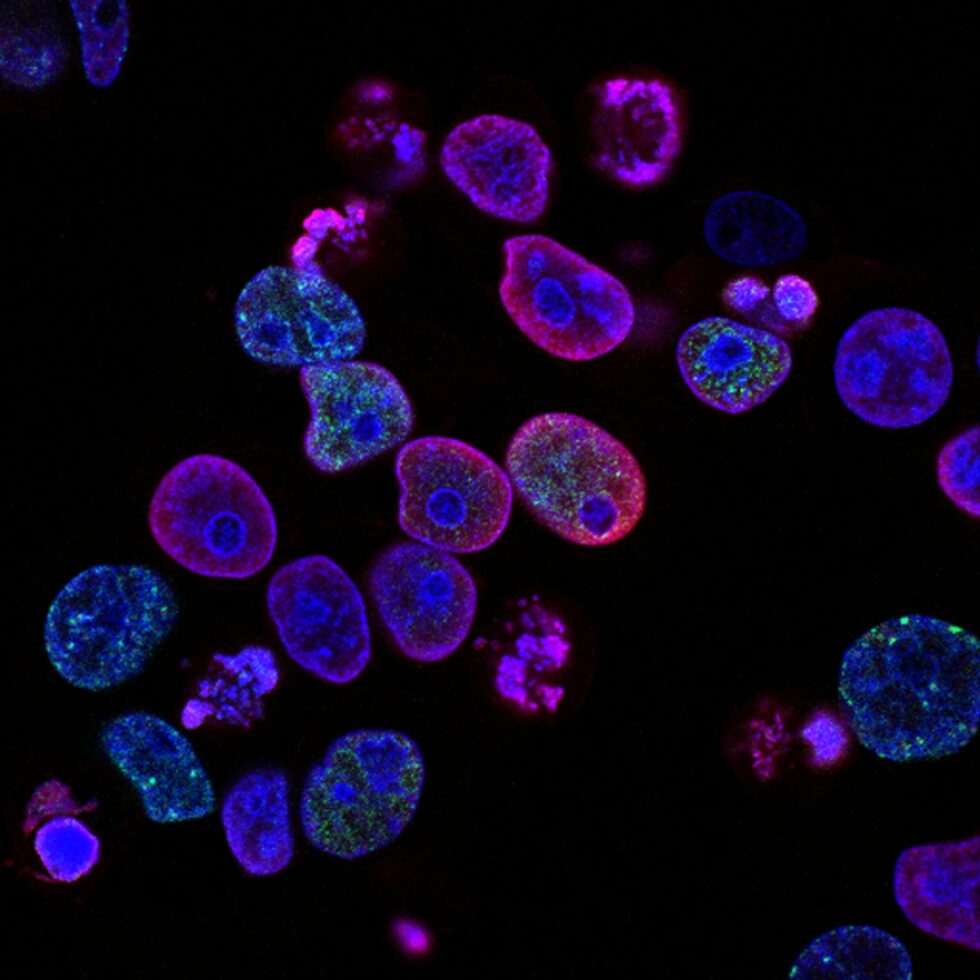

Can biotechnology end suffering?

What is the character of UK innovation policy?

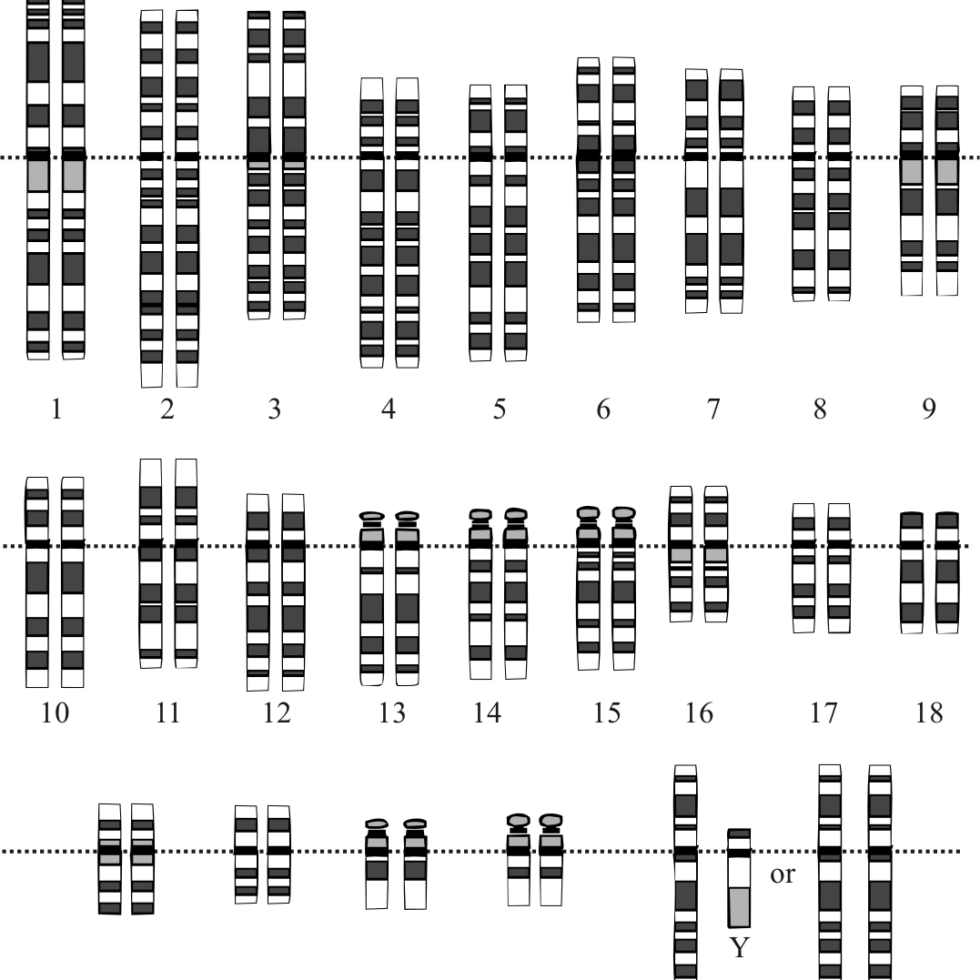

On Eugenics Today and Its ‘Scientific’ Refutations

Drone Kills Libyan Rebel Autonomously: Why ‘Killer Robots’ Need A Ban

Does it still make sense to read science-philosophy classics?

Does Tech Worsen Inequality or Solve Inequality?

Everyone Should Sing

The Best Science Fiction Short Story

This Is What To Eat Each Day